Tuesday, October 13, 2009

How to validate a VMware license?

Go to http://www.vmware.com/checklicense/, paste your license file, and click Validate. Your license will then be validated.

The page opens with the following message:

Welcome to the license file checking utility. This tool will take a pasted license file and parse, reformat, and attempt to repair it. It will also give you statistics regarding the total number of licenses found and highlight inconsistencies that could potentially cause issues.

Currently, this utility only handles "server-based" license files (used with VirtualCenter). Host-based licenses that stand-alone on a single server are not supported at this time.

Saturday, October 10, 2009

Working with BMC and vif on FAS2020

We had a situation with a FAS2020 where the two interfaces, e0a and e0b, each had to be assigned a separate IP address. However, when the NetApp engineer commissioned the FAS2020, they created a vif and assigned both physical interfaces, e0a and e0b, to it.

This is what the back panel of the FAS2020 looks like:

The circled port is for the BMC, which is similar to iLO.

The FAS2020 has two Ethernet interfaces.

So my challenge was to delete the vif on the FAS2020. A vif is a virtual interface bound to two physical NICs, and FilerView is reached through that vif, so we cannot change the vif from FilerView. The change has to be made over either the serial interface or the BMC (similar to iLO, which provides console access).

A couple of facts about the BMC that I learned (remember, I am still learning NetApp):

1. The BMC has its own IP address, and you need to connect to that IP with SSH, not telnet.

2. The user ID is “naroot”, and the password is the root password.

3. Once you are logged in to the BMC console, you can log in to the system console as root with the root password.

This is how to execute the commands:

login as: naroot

naroot@xx.0.86's password:

=== OEMCLP v1.0.0 BMC v1.2 ===

bmc shell ->

bmc shell ->

bmc shell -> system console

Press ^G to enter BMC command shell

Data ONTAP (xxxfas001.xxx.net)

login: root

Password:

xxxfas001> Fri Oct 9 11:08:06 EST [console_login_mgr:info]: root logged in from console

xxxfas001> ifconfig /all

ifconfig: /all: no such interface

xxxfas001> ifconfig

usage: ifconfig [ -a | [ <interface>

[ [ alias | -alias ] [no_ddns] <address> ] [ up | down ]

[ netmask <mask> ] [ broadcast <address> ]

[ mtusize <size> ]

[ mediatype { tp | tp-fd | 100tx | 100tx-fd | 1000fx | 10g-sr | auto } ]

[ flowcontrol { none | receive | send | full } ]

[ trusted | untrusted ]

[ wins | -wins ]

[ [ partner { <address> | <interface> } ] | [ -partner ] ]

[ nfo | -nfo ] ]

xxxfas001> ifconfig -a

e0a: flags=948043<UP,BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

ether 02:a0:98:11:64:24 (auto-1000t-fd-up) flowcontrol full

trunked svif01

e0b: flags=948043<UP,BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

ether 02:a0:98:11:64:24 (auto-1000t-fd-up) flowcontrol full

trunked svif01

lo: flags=1948049<UP,LOOPBACK,RUNNING,MULTICAST,TCPCKSUM> mtu 9188

inet 127.0.0.1 netmask 0xff000000 broadcast 127.0.0.1

svif01: flags=948043<UP,BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

inet xx.xxx.xx.xx netmask 0xffffff00 broadcast xx.xx.xx.255

ether 02:a0:98:11:64:24 (Enabled virtual interface)

xxxfas001> ifconfig svif01 down

xxxfas001> Fri Oct 9 11:10:23 EST [pvif.vifConfigDown:info]: svif01: Configured down

Fri Oct 9 11:10:23 EST [netif.linkInfo:info]: Ethernet e0b: Link configured down.

Fri Oct 9 11:10:23 EST [netif.linkInfo:info]: Ethernet e0a: Link configured down.

xxxfas001> Fri Oct 9 11:10:37 EST [nbt.nbns.registrationComplete:info]: NBT: All CIFS name registrations have completed for the local server.

xxxfas001> vif destory svif01

vif: Did not recognize option "destory".

Usage:

vif create [single|multi|lacp] <vif_name> -b [rr|mac|ip] [<interface_list>]

vif add <vif_name> <interface_list>

vif delete <vif_name> <interface_name>

vif destroy <vif_name>

vif {favor|nofavor} <interface>

vif status [<vif_name>]

vif stat <vif_name> [interval]

xxxfas001> vif desto4~3~ry svif01

xxxfas001> vif destroy svif01

xxxfas001> if config -a

if not found. Type '?' for a list of commands

xxxfas001> ifconfig -a

e0a: flags=108042<BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

ether 00:a0:98:11:64:24 (auto-1000t-fd-cfg_down) flowcontrol full

e0b: flags=108042<BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

ether 00:a0:98:11:64:25 (auto-1000t-fd-cfg_down) flowcontrol full

lo: flags=1948049<UP,LOOPBACK,RUNNING,MULTICAST,TCPCKSUM> mtu 9188

inet 127.0.0.1 netmask 0xff000000 broadcast 127.0.0.1

xxxfas001> ifconfig

usage: ifconfig [ -a | [ <interface>

[ [ alias | -alias ] [no_ddns] <address> ] [ up | down ]

[ netmask <mask> ] [ broadcast <address> ]

[ mtusize <size> ]

[ mediatype { tp | tp-fd | 100tx | 100tx-fd | 1000fx | 10g-sr | auto } ]

[ flowcontrol { none | receive | send | full } ]

[ trusted | untrusted ]

[ wins | -wins ]

[ [ partner { <address> | <interface> } ] | [ -partner ] ]

[ nfo | -nfo ] ]

xxxfas001> ifconfig e0a xx.xx.xx netmask 255.255.255.0

xxxfas001> Fri Oct 9 11:17:24 EST [netif.linkUp:info]: Ethernet e0a: Link up.

xxxfas001> ifconfig e0a xx.xx.xx.xx netmask 255.255.255.0

Fri Oct 9 11:17:50 EST [nbt.nbns.registrationComplete:info]: NBT: All CIFS name registrations have completed for the local server.

xxxfas001> ifconfig e0a xx.xx.xx.xx netmask 255.255.2

xxxfas001> ifconfig -a

e0a: flags=948043<UP,BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

inet xx.xx.xx.xx netmask 0xffffff00 broadcast xx.xx.xx.255

ether 00:a0:98:11:64:24 (auto-1000t-fd-up) flowcontrol full

e0b: flags=108042<BROADCAST,RUNNING,MULTICAST,TCPCKSUM> mtu 1500

ether 00:a0:98:11:64:25 (auto-1000t-fd-cfg_down) flowcontrol full

lo: flags=1948049<UP,LOOPBACK,RUNNING,MULTICAST,TCPCKSUM> mtu 9188

inet 127.0.0.1 netmask 0xff000000 broadcast 127.0.0.1

xxxfas001> Fri Oct 9 11:20:03 EST [nbt.nbns.registrationComplete:info]: NBT: All CIFS name registrations have completed for the local server.

Fri Oct 9 11:21:16 EST [netif.linkInfo:info]: Ethernet e0b: Link configured down.

Fri Oct 9 11:21:29 EST [netif.linkUp:info]: Ethernet e0b: Link up.

After the changes, the log shows the iSCSI connection being established:

Fri Oct 9 11:21:38 EST [iscsi.notice:notice]: ISCSI: New session from initiator iqn.2000-04.com.qlogic:qle4062c.lfc0908h84979.2 at IP addr 192.168.0.2

Fri Oct 9 11:21:38 EST [iscsi.notice:notice]: ISCSI: New session from initiator iqn.2000-04.com.qlogic:qle4062c.lfc0908h84979.2 at IP addr 192.168.0.2

Fri Oct 9 11:22:16 EST [nbt.nbns.registrationComplete:info]: NBT: All CIFS name registrations have completed for the local server.
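A side note on the transcript above: ifconfig -a prints the netmask in hex (0xffffff00), while the ifconfig command takes it in dotted-decimal form (255.255.255.0). The following is a minimal Python sketch, not part of Data ONTAP, for converting between the two. The function names are hypothetical helpers introduced only for illustration.

```python
import ipaddress


def hex_netmask_to_dotted(hex_mask: str) -> str:
    """Convert a hex netmask such as '0xffffff00' to dotted-decimal form."""
    # int() with base 16 accepts the leading '0x' prefix.
    return str(ipaddress.IPv4Address(int(hex_mask, 16)))


def prefix_length(hex_mask: str) -> int:
    """Return the CIDR prefix length for a hex netmask."""
    dotted = hex_netmask_to_dotted(hex_mask)
    return ipaddress.IPv4Network(f"0.0.0.0/{dotted}").prefixlen


if __name__ == "__main__":
    # Netmasks as they appear in the ifconfig -a output above.
    for mask in ("0xffffff00", "0xff000000"):
        print(mask, hex_netmask_to_dotted(mask), prefix_length(mask))
```

This is handy when checking that the mask you typed on the command line matches what the filer reports afterwards.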

FAS2020: The size must be a simple number like '3' or '512'

I was trying to create a 1.3 TB iSCSI LUN on a FAS2020 NetApp filer, and it threw the error message quoted in the title above. It looks like this filer is running a different version of Data ONTAP.

So I created a 1 TB LUN instead, entering the size as a whole number with no decimal part (1TB rather than 1.0TB). This time the LUN was created successfully.

I then supplied the new size in bytes: 1099529453568 * 1.3 = 1429388289639.

When I rechecked, the LUN size was 1.3 TB.
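The arithmetic above can be sketched in Python. This is only an illustration of the calculation: the base figure 1099529453568 is taken as-is from the post (presumably the byte size the filer reported for the 1 TB LUN), and the function name is a hypothetical helper. Decimal is used so the 1.3 factor is not subject to binary floating-point error, and the result is rounded up to a whole number of bytes because the filer only accepts a simple number.

```python
from decimal import Decimal, ROUND_CEILING

# Byte count quoted in the post for the 1 TB LUN (copied, not computed here).
ONE_TB_LUN_BYTES = 1099529453568


def scaled_size_bytes(base_bytes: int, factor: str) -> int:
    """Scale a byte count by a decimal factor, rounding up to whole bytes."""
    exact = Decimal(base_bytes) * Decimal(factor)
    return int(exact.to_integral_value(rounding=ROUND_CEILING))


if __name__ == "__main__":
    # The exact product is 1429388289638.4, so this prints 1429388289639.
    print(scaled_size_bytes(ONE_TB_LUN_BYTES, "1.3"))
```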

Wednesday, September 30, 2009

A few questions about ballooning and configuration maximums

Someone asked me the following questions. I just wanted to share them with you all.

1) What is ballooning in VMware?

Ballooning

When the ESX host’s machine memory is scarce or when a VM hits a limit, the kernel needs to reclaim memory and prefers ballooning over swapping. The balloon driver is installed inside the guest OS as part of the VMware Tools installation and is also known as the vmmemctl driver.

When the ESX kernel wants to reclaim memory, it instructs the balloon driver to inflate. The balloon driver then requests memory from the guest OS. When there is enough memory available, the guest OS will return memory from its “free” list. When there isn’t enough memory, the guest OS will have to use its own memory management techniques to decide which particular pages to reclaim and if necessary page them out to its swap- or page-file.

In the background, the ESX kernel frees up the machine memory page that corresponds to the guest physical memory page allocated to the balloon driver. When enough memory has been reclaimed, the balloon driver deflates after some time, returning physical memory pages to the guest OS.

This process will also decrease the Host Memory Usage parameter.

Ballooning is only effective if the guest has available space in its swap- or page-file, because used memory pages need to be swapped out in order to allocate the page to the balloon driver. Ballooning can lead to high guest memory swapping. This is guest OS swapping inside the VM and is not to be confused with ESX host swapping, which I will discuss later on.

To view balloon activity we use the esxtop utility again from the COS (see below). From the COS, issue the command “esxtop” and then press “m” to display the memory statistics page. Now press “f” and then “i” to show the vmmemctl (ballooning) columns.

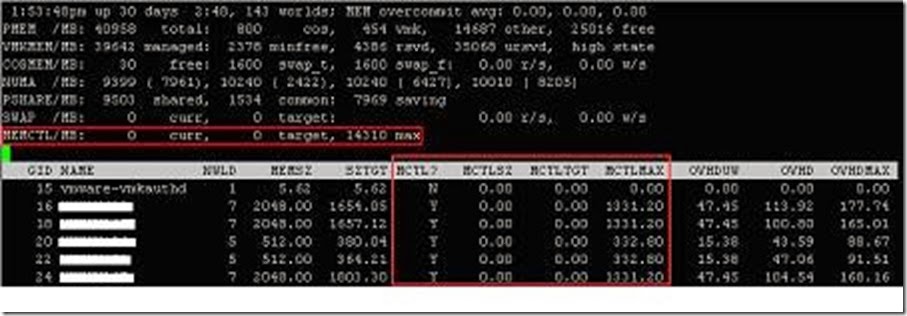

On the top we see the “MEMCTL” counter which shows us the overall ballooning activity. The “curr” and “target” values are the accumulated values of the “MCTLSZ” and “MCTLTGT” as described below. We have to look for the “MCTL” columns to view ballooning activity on a per VM basis:

● “MCTL?”: indicates if the balloon driver is active “Y” or not “N”

● “MCTLSZ”: the amount (in MB) of guest physical memory that is actually reclaimed by the balloon driver

● “MCTLTGT”: the amount (in MB) of guest physical memory that is going to be reclaimed (targeted memory). If this counter is greater than “MCTLSZ”, the balloon driver inflates, causing more memory to be reclaimed. If “MCTLTGT” is less than “MCTLSZ”, the balloon will deflate. This deflating process runs slowly unless the guest requests memory.

● “MCTLMAX”: the maximum amount of guest physical memory that the balloon driver can reclaim. Default is 65% of assigned memory.

You can limit the maximum balloon size by specifying the “sched.mem.maxmemctl” parameter in the .vmx file of the VM. This value must be in MB.
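The relationship between these counters can be sketched in a few lines of Python. This is only an illustration of the rules described above, not VMware code: the function names are hypothetical, and the 65% default is the figure quoted in the text.

```python
def default_balloon_max_mb(assigned_mb: int, percent: int = 65) -> int:
    """Default MCTLMAX in whole MB: 65% of assigned memory, per the text above."""
    return assigned_mb * percent // 100


def balloon_action(mctlsz_mb: int, mctltgt_mb: int) -> str:
    """Describe what the balloon driver does given the current size and target."""
    if mctltgt_mb > mctlsz_mb:
        return "inflate"   # target above current size: reclaim more memory
    if mctltgt_mb < mctlsz_mb:
        return "deflate"   # target below current size: give memory back
    return "steady"        # target already reached


if __name__ == "__main__":
    # Hypothetical VM with 4096 MB assigned and 512 MB currently ballooned.
    print(default_balloon_max_mb(4096))
    print(balloon_action(mctlsz_mb=512, mctltgt_mb=1024))
    print(balloon_action(mctlsz_mb=512, mctltgt_mb=0))
```

Reading esxtop with this in mind, a VM whose “MCTLTGT” stays above “MCTLSZ” is still being squeezed, while one whose target has dropped to 0 is slowly getting its memory back.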

2) What is the physical memory limit of a VMware ESX Server?

3) What is the physical CPU limit of a VMware ESX Server?

Hardware Processors

Table 2-1 displays the number of physical processors supported per ESX Server host.

| Processor type | Hyperthreading | Maximum Sockets | Maximum Cores | Maximum Threads |
| --- | --- | --- | --- | --- |
| Single-core | With hyperthreading | 16 | 16 | 32 |
| Single-core | Without hyperthreading | 16 | 16 | 16 |
| Dual-core | With hyperthreading | 8 | 16 | 32 |
| Dual-core | Without hyperthreading | 16 | 32 | 32 |

● A total of 128 virtual processors in all virtual machines per ESX Server host

● 64GB of RAM per ESX Server system

4) What is the limit on the number of VMs per ESX Server? 128

Error during the configuration of the host: Failed to update disk partition information

I was getting the error in the title when I tried to create a VMFS datastore on an ESX host.

Some background:

1. The LUN was created on a FAS3050 filer.

2. The LUN was mapped using software iSCSI.

3. The LUN was visible under the storage adapter.

When I tried to create the datastore, I got the error.

I found the following document on the VMware site, which explains how to fix the error. In a nutshell, I did this:

1. Run fdisk -l. This will give you a list of all of your current partitions. This is important because they are numbered. If you are using SCSI you should see that all partitions start with /dev/sda# where # is a number from 1 to whatever. Remember this list of numbers, as you are going to be adding at least one more and will have to refer to the new partition by its number.

2. Run fdisk /dev/sda. This will allow you to create a partition on the first drive. If you have more than one SCSI drive (usually the case with more than one RAID container) then you will have to type the letter value for the device you wish to create the partition on (sdb, sdc, and so on).

3. You are now in the fdisk program. If you get confused type "m" for menu. This will list all of your options. There are a lot of them. You will be ignoring most of them.

4. Type "n". This will create a new partition. It will ask you for the starting cylinder. Unless you have a very good reason hit "enter" for default. The program will now offer you a second option that says ending cylinder. If you press enter you will select the rest of the space. In most cases this is what you want.

5. Once you have selected the start and end cylinders you should get a success message. Now you must set the partition type, or its ID. This is option "t" on the menu.

6. Type "t". It will ask you for partition number. This is where that first fdisk is useful. You need to know what the new partition number is. It will be one more than the last number on fdisk. Type this number in.

7. You will now be prompted for the hex code for the partition type. You can also type "L" for a list of codes. The code you want is "fb". So type "fb" in the space. This will return that the partition has been changed to fb (unknown). That is what you want.

8. Now that you have configured everything you want to save it. To do so choose the "w" option to write the table to disk and exit.

9. Because the drive is being used by the console OS you will probably get an error that says "WARNING: Re-reading the partition table failed with error 16: device or resource busy." This is normal. You will need to reboot the ESX host.

10. Once you have rebooted you can format the partition as VMFS. DO NOT do this from the GUI. You must once again log into the console or remote in through PuTTY.

11. Once you have su'd to root you must type in

"vmkfstools -C vmfs3 /vmfs/devices/disks/vmhba0:0:0:#"

Where # is the number of the new partition. You should now get a "successfully created new volume" message. If you get an error you probably chose the wrong partition. Do an fdisk -l and choose the number with the "unknown" partition type. Note: If you have more than one SCSI disk or more than one container, the first 0 may need to be a 1 as well.

The problem I faced:

When I ran the last step, "vmkfstools -C vmfs3 /vmfs/devices/disks/vmhba0:0:0:#", I got this error:

“Error: Invalid handle”

I was wondering what I was doing wrong. One discussion on VMTN suggested running the command mkfs.ext3, so I ran it.

It basically sat at some inode and never moved forward. The host was in a hung state, so I checked it using iLO and found a PSOD.

I rebooted and tried the above steps again, but got the same PSOD.

Finally I logged into the filer and found that the volume presented by the SAN admin was a replicated volume. It was a SnapMirror copy of a volume from the protected site, used for SRM.

Crap ☺ ……

Not sure why he mapped an SRM replicated volume to the recovery site.