We are trying to implement SRM, and I set up the NetApp 7.3 simulator on Ubuntu. During setup, NetApp assigns only 3 disks to the simulator.

This aggr is created by default when the simulator is installed. It consists of 3 disks of 120 MB each. Once the installation is complete, make sure you add extra disks to the aggr, or else you will end up with the error shown in the screenshot below.

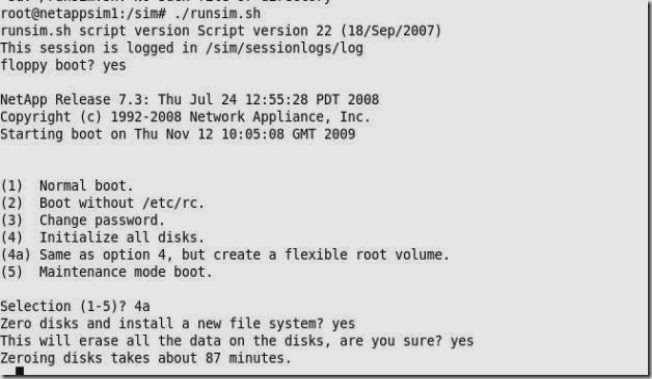

When you get the above error, boot the system into maintenance mode and then create a new root volume. To get into maintenance mode, re-run the setup and set “floppy boot” to Yes. Be aware that option 4a will initialize all the disks and you will lose your data.

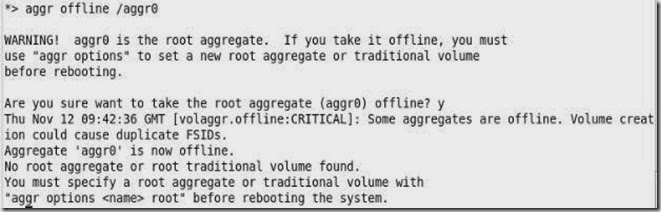

I booted the system into maintenance mode and took aggr0 (the root volume) offline.

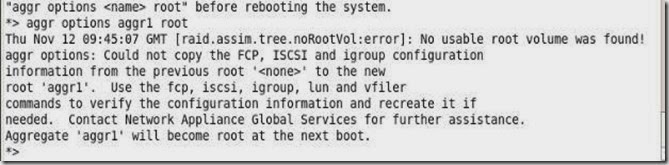

Now create the new root volume with the following command.

Reboot the system and run ./runsim.sh. If you have followed all the steps, you should be able to get the sim online. I got the following messages because I did not go through the 4a step.

root@netappsim1:/sim# ./runsim.sh

runsim.sh script version Script version 22 (18/Sep/2007)

This session is logged in /sim/sessionlogs/log

NetApp Release 7.3: Thu Jul 24 12:55:28 PDT 2008

Copyright (c) 1992-2008 Network Appliance, Inc.

Starting boot on Thu Nov 12 14:30:07 GMT 2009

Thu Nov 12 14:30:15 GMT [fmmb.current.lock.disk:info]: Disk v4.18 is a local HA mailbox disk.

Thu Nov 12 14:30:15 GMT [fmmb.current.lock.disk:info]: Disk v4.17 is a local HA mailbox disk.

Thu Nov 12 14:30:15 GMT [fmmb.instStat.change:info]: normal mailbox instance on local side.

Thu Nov 12 14:30:16 GMT [raid.vol.replay.nvram:info]: Performing raid replay on volume(s)

Restoring parity from NVRAM

Thu Nov 12 14:30:16 GMT [raid.cksum.replay.summary:info]: Replayed 0 checksum blocks.

Thu Nov 12 14:30:16 GMT [raid.stripe.replay.summary:info]: Replayed 0 stripes.

Replaying WAFL log

.........

Thu Nov 12 14:30:20 GMT [rc:notice]: The system was down for 542 seconds

Thu Nov 12 14:30:20 GMT [javavm.javaDisabled:warning]: Java disabled: Missing /etc/java/rt131.jar.

Thu Nov 12 14:30:20 GMT [dfu.firmwareUpToDate:info]: Firmware is up-to-date on all disk drives

Thu Nov 12 14:30:20 GMT [sfu.firmwareUpToDate:info]: Firmware is up-to-date on all disk shelves.

Thu Nov 12 14:30:21 GMT [netif.linkUp:info]: Ethernet ns0: Link up.

Thu Nov 12 14:30:21 GMT [rc:info]: relog syslog Thu Nov 12 13:50:59 GMT [sysconfig.sysconfigtab.openFailed:notice]: sysconfig: table of valid configurations (/etc/sys

Thu Nov 12 14:30:21 GMT [rc:info]: relog syslog Thu Nov 12 14:00:00 GMT [kern.uptime.filer:info]: 2:00pm up 2:09 0 NFS ops, 0 CIFS ops, 0 HTTP ops, 0 FCP ops, 0 iS

Thu Nov 12 14:30:21 GMT [httpd.servlet.jvm.down:warning]: Java Virtual Machine is inaccessible. FilerView cannot start until you resolve this problem.

Thu Nov 12 14:30:21 GMT [sysconfig.sysconfigtab.openFailed:notice]: sysconfig: table of valid configurations (/etc/sysconfigtab) is missing.

Thu Nov 12 14:30:21 GMT [snmp.agent.msg.access.denied:warning]: Permission denied for SNMPv3 requests from root. Reason: Password is too short (SNMPv3 requires at least 8 characters).

Thu Nov 12 14:30:22 GMT [mgr.boot.disk_done:info]: NetApp Release 7.3 boot complete. Last disk update written at Thu Nov 12 14:21:08 GMT 2009

Thu Nov 12 14:30:22 GMT [mgr.boot.reason_ok:notice]: System rebooted after power-on.

Thu Nov 12 14:30:22 GMT [perf.archive.start:info]: Performance archiver started. Sampling 20 objects and 187 counters.
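Most of the boot output above is informational, so it can help to filter out just the warnings. Here is a minimal sketch of how that might be done from the Linux host. It assumes the session log at /sim/sessionlogs/log contains the same lines as the console output, and `list_warnings` is only a helper name I made up for illustration:

```shell
#!/bin/sh
# Hypothetical helper: print only the warning-level events from a session log.
# Assumption: the log holds the same "[event.name:severity]: message" lines
# that appear on the console. Pass another file as $1 to override the path.
list_warnings() {
    log_file="${1:-/sim/sessionlogs/log}"
    # Keep lines whose event tag ends in ":warning]".
    grep -E '\[[A-Za-z0-9_.]+:warning\]' "$log_file"
}
```

Run against the output above, `list_warnings` should show the Java, FilerView and SNMPv3 warnings and skip the info and notice lines.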

Check whether Java is disabled:

filer> java

Java is not enabled.

If Java is not enabled, FilerView won't work and you need to re-install the simulator image.
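The same condition can also be spotted without logging in to the filer, by looking for the `javavm.javaDisabled` event in the session log. This is only a rough sketch under the assumption that the log at /sim/sessionlogs/log records the boot messages; `java_disabled_in_log` is a made-up name, not part of the simulator:

```shell
#!/bin/sh
# Hypothetical helper: succeed (exit 0) if the log records the
# javavm.javaDisabled event, fail (exit 1) if it does not.
# Assumption: the session log contains the console boot messages.
java_disabled_in_log() {
    log_file="${1:-/sim/sessionlogs/log}"
    grep -q 'javavm\.javaDisabled' "$log_file"
}

# Example use:
#   java_disabled_in_log && echo "Java is disabled, FilerView will not start"
```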

I will explain how to reinstall the simulator using NFS/CIFS once I have tested it. Keep following my blog.