A pool of XenServer hosts has been created with NIC 0 and NIC 1 bonded, and the management interface runs on this bonded network. All configuration steps were done from XenCenter.

Problem: A new XenServer host is added to the pool. Its NICs were un-bonded before it was added (the same problem occurs if they are bonded). The new host picks up the pool network configuration, but the Bond 0+1 network shows as Unknown rather than Connected.

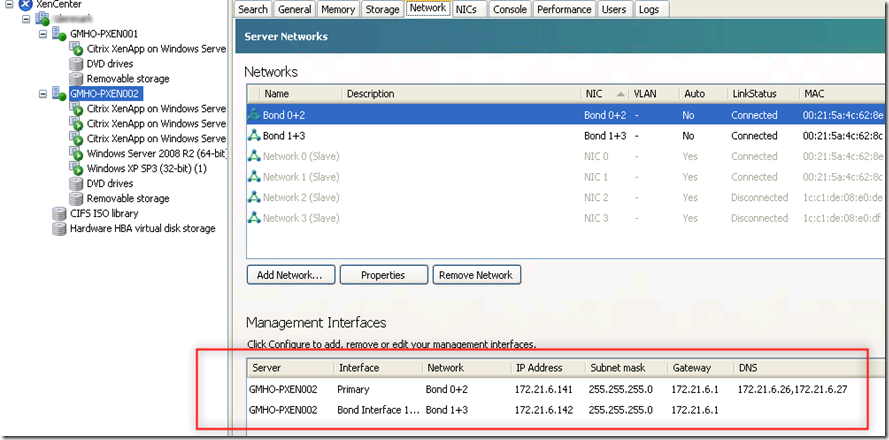

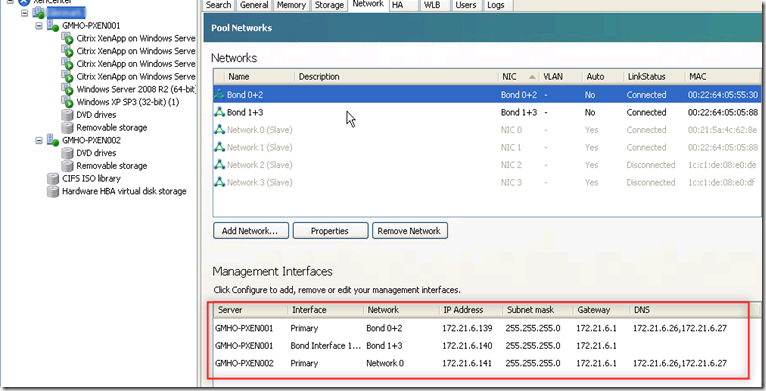

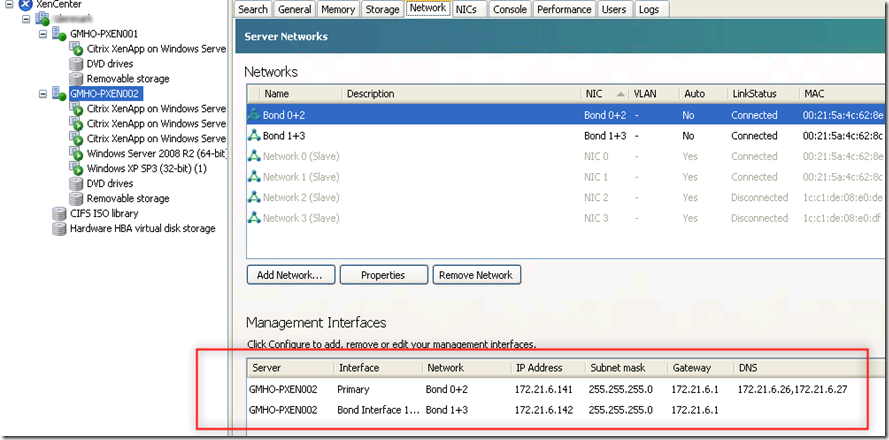

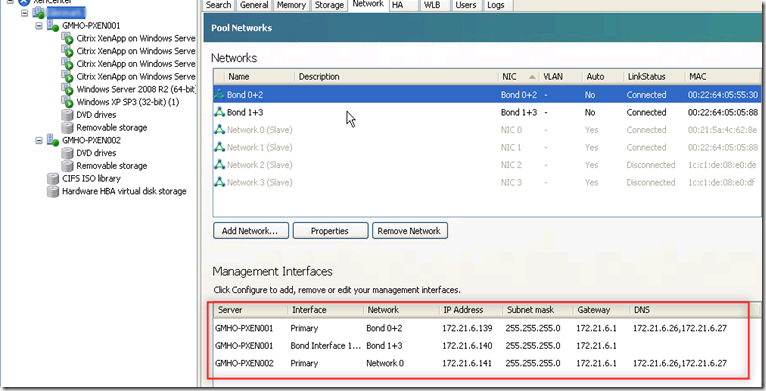

Below, host GMHO-PXEN002 has been added to the resource pool, and under “Management Interfaces” the bond appears only as “Network”.

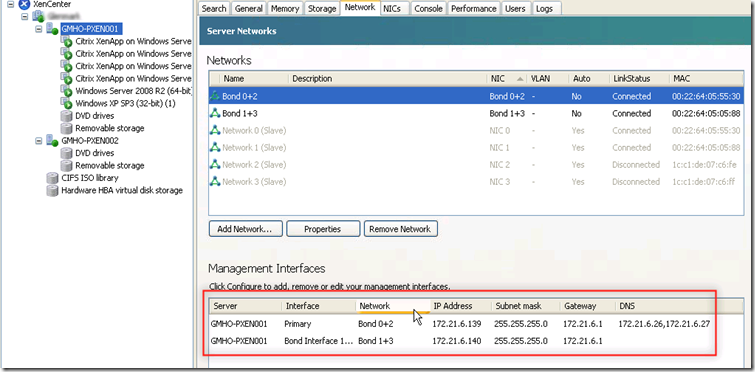

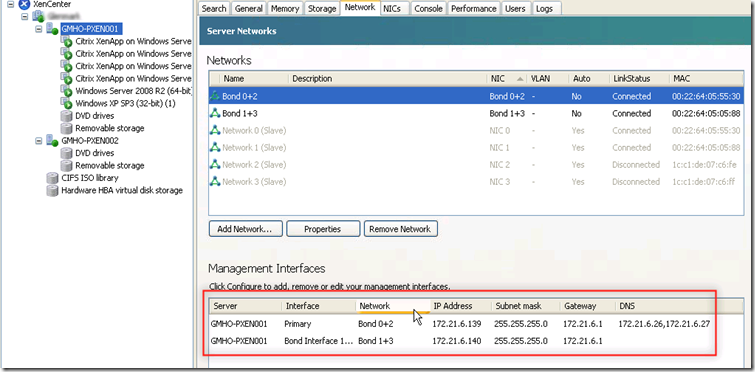

For host GMHO-PXEN001, however, both “Management Interfaces” are clearly visible with the correct bond.

At the pool level, only three “Management Interfaces” are visible.

I then checked the files under `/proc/net/bonding` on host 001 and found two bond files, one for each of the two bonds.

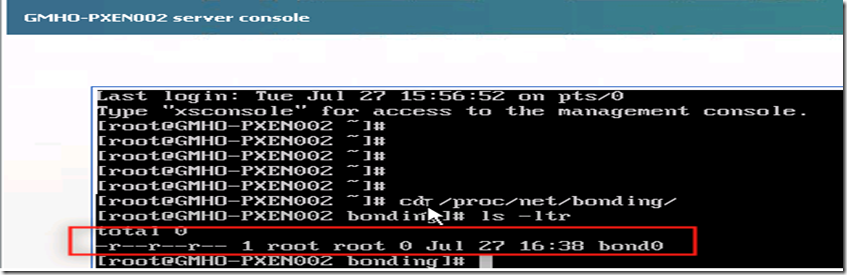

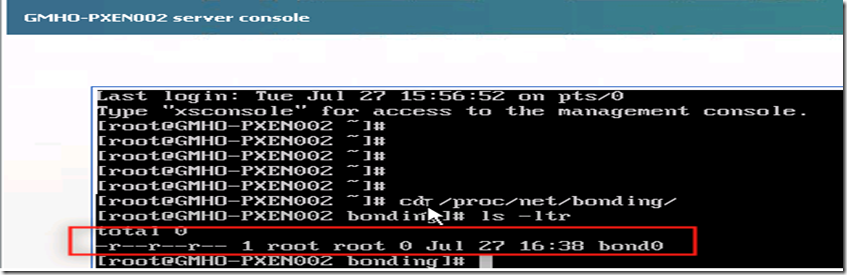

I then checked the same directory on host 002 and found only one file, even though there are two bonds.
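The comparison between the two hosts can be scripted so it is quick to repeat on every pool member. The sketch below is a hypothetical helper, not a XenServer tool: it assumes the hosts use the Linux bonding driver (so that `/proc/net/bonding` exists, as in the screenshots above) and that each bond file contains the usual `MII Status:` and `Slave Interface:` lines. The function names `list_bonds` and `parse_bond_file` are illustrative.

```python
import os
import re
from typing import Dict, List

# Directory where the Linux bonding driver exposes one file per bond (bond0, bond1, ...).
BONDING_DIR = "/proc/net/bonding"


def parse_bond_file(text: str) -> Dict[str, object]:
    """Extract the bond-level MII status and the slave NICs from one bond file."""
    # Every "Slave Interface:" line names one NIC that belongs to this bond.
    slaves: List[str] = re.findall(r"^Slave Interface:\s*(\S+)", text, re.MULTILINE)
    # The first "MII Status:" line in the file is the status of the bond itself;
    # later occurrences belong to the individual slaves.
    status = re.search(r"^MII Status:\s*(\S+)", text, re.MULTILINE)
    return {"mii_status": status.group(1) if status else None, "slaves": slaves}


def list_bonds(bonding_dir: str = BONDING_DIR) -> Dict[str, Dict[str, object]]:
    """Return one entry per bond file found in bonding_dir (empty if it is missing)."""
    if not os.path.isdir(bonding_dir):
        return {}
    bonds = {}
    for name in sorted(os.listdir(bonding_dir)):
        with open(os.path.join(bonding_dir, name)) as fh:
            bonds[name] = parse_bond_file(fh.read())
    return bonds


if __name__ == "__main__":
    bonds = list_bonds()
    # On a healthy host this count should match the number of bonds in the pool.
    print(f"{len(bonds)} bond file(s) found")
    for name, info in bonds.items():
        print(name, info["mii_status"], ", ".join(info["slaves"]))
```

Run on each host, a healthy member should report as many bond files as the pool has bonds. A host that, like GMHO-PXEN002 here, reports fewer is the one whose bond is shown as Unknown.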

Resolution

Remove the Bond 0+1 network from the pool (this affects all servers) and create Bond 0+1 again. The bond now shows as Connected on all XenServer hosts.

To cross-check, I looked under `/proc/net/bonding` on host 002 again and found that there are now two files.

The bond is also shown correctly at the host level.