Yes, I am back after a long time, for the obvious reason. I wanted to share something: I was browsing my Gmail and guess what happened? Google was running on a VMware virtual machine. You don't believe it? See below.

Thursday, February 11, 2010

Saturday, January 9, 2010

I lost My Dear Friend on 8th Jan

On 8th of Jan 2010 at 8 AM, I got a call from a friend and the conversation went like this:

Vinod: Hey Vikash, I am Vasu's friend, do you remember?

Vikash : Of course I do.

Vinod: I have some bad news to share… Vasu is no more.

Vikash: Hey, are you serious? Tell me you are joking.

Vinod: No it happened early morning.

In this tragic accident we lost three friends and two were injured.

We had spent good times together, but now it has ended like this.

Oh heart, if one should say to you that the soul perishes like the body, answer that the flower withers, but the seed remains. May GOD provide every helping hand to your family and give them the strength to overcome this tragic moment.

This was the last conversation I had with him, over GTALK, and I missed calling him at 9902622777.

Wednesday, December 16, 2009

The Parent Virtual Disk has been modified since child was created for VMware Workstation

HOW TO for Windows and VMware Workstation:

Using this article as a baseline, instead of "grep" use this CMD command: find "CID" "x:\folder\base-file.vmdk"

Use CTRL+C to abort the listing after the first few lines.

Take note of the value on the CID=xxxxxxxx line.

If you have not already done so, download and install HEX EDIT FREE (I used v3.0f).

NOTE: Since the .vmdk files can contain both the CID and the whole disk, they are both fragile AND HUGE! Take special care when editing these files. Always take a backup copy and make it write-protected!

This is also why you need a special editor (like HEX edit) to edit the file correctly.

When ready, start HEX edit and open the first snapshot file (e.g. base-file-000001.vmdk).

Find the "parentCID=" string in the ASCII section of the editor and place the cursor in front of the first character of its value. Start typing the CID from the base file, overwriting the old value.

If prompted, say YES to disable write protection BUT NO to disable Replace mode. This is important to keep the file exactly the way it was, only changing the CID value.

Repeat this process for the other .vmdk files in your chain if snapshot files in the middle of the chain have been opened.
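For anyone who prefers a script to a hex editor, the steps above can be sketched in Python. This is a minimal sketch, not a VMware tool: it assumes the descriptor text (with `CID=` and `parentCID=` lines holding 8 hex digits) sits within the first 1 MiB of each .vmdk file, and the function names are hypothetical. Like Replace mode in the hex editor, it overwrites exactly the 8 bytes of the old parentCID value and leaves everything else untouched. Work on a backup copy first.

```python
import re

# Descriptor lines; the CID values are stored as 8 hex digits.
CID_RE = re.compile(rb"^CID=([0-9a-fA-F]{8})", re.MULTILINE)
PARENT_CID_RE = re.compile(rb"^parentCID=([0-9a-fA-F]{8})", re.MULTILINE)

# Assumption: the text descriptor lies within the first 1 MiB of the file.
SCAN_BYTES = 1 << 20


def read_cid(data: bytes) -> bytes:
    """Return the CID value found in descriptor bytes."""
    match = CID_RE.search(data)
    if match is None:
        raise ValueError("no CID= line found")
    return match.group(1)


def patch_parent_cid(data: bytes, new_cid: bytes) -> bytes:
    """Return a copy of data with the parentCID value replaced (same length)."""
    if not re.fullmatch(rb"[0-9a-fA-F]{8}", new_cid):
        raise ValueError("CID must be exactly 8 hex digits")
    match = PARENT_CID_RE.search(data)
    if match is None:
        raise ValueError("no parentCID= line found")
    start, end = match.span(1)
    return data[:start] + new_cid + data[end:]


def fix_child_disk(base_path: str, child_path: str) -> bytes:
    """Copy the base disk's CID into the child's parentCID, in place."""
    with open(base_path, "rb") as base:
        base_cid = read_cid(base.read(SCAN_BYTES))
    with open(child_path, "r+b") as child:
        match = PARENT_CID_RE.search(child.read(SCAN_BYTES))
        if match is None:
            raise ValueError("no parentCID= line found in child")
        child.seek(match.start(1))
        child.write(base_cid)  # overwrite only the 8 value bytes
    return base_cid
```

Usage would be something like `fix_child_disk(r"x:\folder\base-file.vmdk", r"x:\folder\base-file-000001.vmdk")`, then repeating with the next link of the chain.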

Good luck people with all your restores.

And PLEASE VMware, make your programs check for this when mounting a new hard drive file! ;)

Monday, December 14, 2009

Deep Dive Into SCSI Reservation

First I will talk about the problem and then briefly discuss SCSI reservations. Some of the content comes from support personnel. This is just to spread knowledge; I am not claiming credit.

Problem: We had a 5-host ESX cluster running 3.5 U4 on DL380 G5 servers, each with 32 GB of memory and 8 logical CPUs.

Suddenly we realized that 3 of the ESX hosts were disconnected from Virtual Center. I restarted the "mgmt-vmware" service, but no luck. I then disconnected the ESX host from VC and also removed it, but we were still not able to add the host back. Finally I tried to log in to the ESX host directly with the VC client, and that failed too. That is when we realized there was a serious issue.

I then tried to cd /vmfs/volumes and it gave me the following message:

I checked all the ESX hosts and each one had the same issue. I could 'cd' into any directory except the VMFS ones. This confirmed that something had happened to the VMFS volume.

I ran the QLogic utility "iscli" and performed HBA-level troubleshooting. The HBA does report an issue:

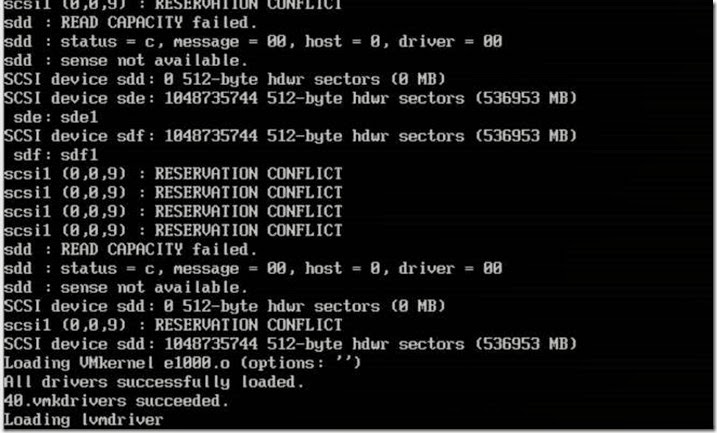

I checked the vmkernel log, which made me very suspicious. It had lots of SCSI reservation errors, and all 3 ESX hosts showed similar errors.

Since this was a production scenario, I decided not to waste time and contacted VMware for a possible solution. After a WebEx session, VMware passed on the following recommendations to fix this issue:

- put each ESX host into maintenance mode (only 1 at a time)

- allow the VMs to be migrated off

- reboot the ESX host

- repeat the procedure for each ESX host.

OR

- Failover the active storage processor (Contact SAN vendor for assistance with this)

Well, I could not put the ESX hosts into maintenance mode ('MM') since all of them share the LUN. Though only 3 had reported the problem, the other two ESX hosts were also unable to see the LUN. I checked with the storage admin about a possible failover and he declined, since the filer is also used by other applications. Finally I decided to reboot the ESX hosts, a shortcut that fixes the issue but with the maximum outage. After rebooting the ESX hosts I saw the following messages on the console:

After the reboot, the ESX hosts were not able to come back online. One ESX host came online after waiting for 1 hour. Once it appeared in VC, I found that none of the LUNs were mounted. I ran a rescan without any success. I then ran HBA-level troubleshooting and found that I could reach the filer IP from the HBA BIOS. I then found that the target IPs were those of the vswif (a virtual interface on the NetApp filer to which all physical NICs are tied for fault tolerance and redundancy). Just for testing, I added the physical IP of the target filer as the HBA target and ran a rescan. I was able to see all the VMs.

I checked with the network folks and they confirmed that there was a routing issue between the vswif network and the iSCSI network. They are on two different VLANs (not very sure why).

I then put the rest of the hosts into recovery mode and disabled the HBA. After that they came online fast, and I then added the correct target IP.

That is how we fixed this issue.

So now the question: why did it happen?

SCSI reservations are used during the snapshot process, and it seems there was a stale SCSI reservation on the storage/HBA, which can cause snapshots to fail as well as performance problems.

So what is a SCSI reservation?

ESX uses a locking mechanism called "SCSI reservation" to share LUNs between ESX hosts. These reservations are non-persistent and are released when the activity that required them is completed. The Service Console regularly monitors the LUNs and checks for any reservations that have aged too long. The ESX host will then try to release the lock. If, however, another application running from the Service Console is using the LUN, it can immediately reclaim the LUN or place another reservation. Thus, if 3rd-party applications are not designed to release their locks, we see a continuous flood of heartbeat reclaiming events in the logs.

SCSI reservations are needed to prevent data corruption in environments where LUNs are shared between many hosts.

Every time a host tries to update the VMFS metadata, it needs to place a SCSI reservation on the LUN.

When 2 hosts try to reserve the same LUN at the same time, a SCSI reservation conflict occurs; if the number of reservation conflicts is too big, ESX will fail the I/O.

SCSI reservation conflicts, "status busy" messages and Windows symmpi errors can be a sign of SAN latency or of a failure to release SCSI file locks when requested. The SAN storage processors can keep holding the reservation, which then requires a LUN reset or an SP reboot.
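To make the locking behaviour concrete, here is a toy in-memory model of a SCSI-2 style exclusive reservation. It is an illustration under simplifying assumptions, not how the VMkernel or an array is implemented: `Lun`, `ReservationConflict` and `update_metadata` are hypothetical names. One host holds the reservation, any other host gets a conflict, a release from a non-holder has no effect, and `reset()` stands in for the LUN reset that clears a stale reservation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class ReservationConflict(Exception):
    """Another host already holds the reservation on this LUN."""


@dataclass
class Lun:
    """Toy model of a LUN with a SCSI-2 style exclusive reservation."""
    holder: Optional[str] = None

    def reserve(self, host: str) -> None:
        # Exclusive: only one host may hold it; the holder may re-reserve.
        if self.holder is not None and self.holder != host:
            raise ReservationConflict(f"LUN is reserved by {self.holder}")
        self.holder = host

    def release(self, host: str) -> None:
        # A release from a host that is not the holder has no effect.
        if self.holder == host:
            self.holder = None

    def reset(self) -> None:
        # Stands in for a LUN reset clearing a stale reservation.
        self.holder = None


def update_metadata(lun: Lun, host: str,
                    change: Callable[[dict], None], metadata: dict) -> None:
    """Reserve the LUN, apply one metadata change, then always release."""
    lun.reserve(host)
    try:
        change(metadata)
    finally:
        lun.release(host)
```

A stale reservation is simply a holder that never calls `release()`: every other host then hits `ReservationConflict` until something like `reset()` clears it.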

VMware ESX 3.0.x and 3.5 use "Exclusive Logical Unit Reservations" (SCSI-2 style reservations). These reservations are used whenever the VMkernel applies changes to the VMFS metadata. This prevents multiple hosts from writing to the metadata concurrently and is the mechanism used to prevent VMFS corruption. After the metadata update is done, the VMkernel releases the reservation.

Examples of VMFS operations that require metadata updates are:

* Creating or deleting a VMFS data store

* Expanding a VMFS data store onto additional extents

* Powering on or off a VM

* Acquiring or releasing a lock on a file

* Creating or deleting a file

* Creating a template

* Deploying a VM from a template

* Creating a new VM

* Migrating a VM with VMotion

* Growing a file (e.g. a Snapshot file or a thin provisioned Virtual Disk)

When the VMkernel needs to update the metadata of the VMFS volume on a LUN, it requests a SCSI reservation of that LUN from the storage array. If the LUN is still reserved by another host and the reservation has not yet been granted to this host, the I/O fails due to a reservation conflict and the VMkernel retries the command up to 80 times. The VMkernel log shows the remaining "Conflict Retries" (CR) count, which counts down to zero. The retry interval is randomized between hosts to prevent concurrent retries on the same LUN from different hosts, which improves the chances of acquiring a reservation.

If the retries are exhausted, the I/O fails due to too many SCSI reservation conflicts.
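The retry behaviour described above can be sketched as a loop with a randomized wait and a countdown. This is only a sketch: the limit of 80 comes from the text, but the delay values are hypothetical placeholders (VMware's real intervals are not stated here), and `try_reserve` is a hypothetical callback that returns True when the reservation is granted.

```python
import random
import time
from typing import Callable, Optional

MAX_CONFLICT_RETRIES = 80  # retry limit quoted in the text


class TooManyReservationConflicts(Exception):
    """All conflict retries were used up; the I/O is failed."""


def reserve_with_retries(
    try_reserve: Callable[[], bool],
    max_retries: int = MAX_CONFLICT_RETRIES,
    base_delay: float = 0.01,  # hypothetical value, not VMware's
    jitter: float = 0.05,      # hypothetical value, not VMware's
    rng: Optional[random.Random] = None,
) -> int:
    """Try to reserve; on conflict, wait a randomized interval and retry.

    Returns the remaining conflict-retry (CR) count when the reservation
    is granted, mirroring the countdown shown in the vmkernel log.
    """
    if rng is None:
        rng = random.Random()
    cr = max_retries
    while not try_reserve():
        if cr == 0:
            raise TooManyReservationConflicts("conflict retries exhausted")
        cr -= 1
        # Randomized wait so different hosts are unlikely to retry in lockstep.
        time.sleep(base_delay + rng.uniform(0.0, jitter))
    return cr
```

The randomized sleep is the important design choice: if every host waited the same fixed interval, hosts that collided once would keep colliding on every retry.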

Areas of SCSI reservation contention:

-- 3rd party software (agents)

-- firmware on SAN SP/HBA

-- pathing

-- Heavy I/O

Therefore, to mitigate the negative impact of SCSI reservations:

1. Try to serialize operations on the shared LUNs; if possible, do not run operations that require SCSI reservations on different hosts at the same time.

2. Increase the number of LUNs and limit the number of ESX hosts accessing the same LUN.

3. Avoid using snapshots, as they cause a lot of SCSI reservations.

4. Do not schedule backups (VCB or console-based) in parallel from the same LUN.

5. Try to limit the number of VMs per LUN, and check which targets are being used to access the LUNs.

6. Check that you have the latest HBA firmware across all ESX hosts.

7. Check that the ESX host is running the latest BIOS (to avoid conflicts with the HBA drivers).

8. Contact your SAN vendor for information on SP timeout values, performance settings and storage array firmware.

9. Turn off 3rd-party agents (e.g. storage agents).

10. Check MSCS RDMs (the active node holds a permanent reservation).

11. Ensure correct Host Mode setting on the SAN array

12. LUNs removed from the system without a rescan can appear as locked.

When the storage processor fails to release a reservation, either the release request didn't come through (hardware, firmware or pathing problems), or 3rd-party apps running on the Service Console didn't send a release, or busy VM operations are still holding the lock.

Some data stolen from: http://kb.vmware.com/kb/1005009 (Troubleshooting SCSI Reservation failures on Virtual Infrastructure 3 and 3.5)

Some unanswered questions:

This brings other questions to my mind. Even if you have HA/DRS, you can still have an outage. Fault tolerance? How can that help if you have messed up routing? What if someone pulls the HBA cable itself? HA kicks in only if you have a host failure or the Service Console goes down; if neither happens, no HA event occurs. So we need to plan storage-level HA. Why doesn't VMware provide the ability to take care of storage-level HA as well? Yes, I know it involves multiple vendors, but can't VMware work with them?

Friday, December 4, 2009

NFS: 898: RPC error 13 (RPC was aborted due to timeout) trying to get port for Mount Program (100005)

I was trying to mount an NFS datastore on vSphere 4.0 from my NetApp simulator (version 7.3), and I got the following error message from the VMkernel while mounting:

WARNING: NFS: 898: RPC error 13 (RPC was aborted due to timeout) trying to get port for Mount Program (100005) Version (3) Protocol (TCP) on Server (x.x.101.124)

[root@xxx ~]# esxcfg-route

VMkernel default gateway is x.x.100.1

[root@xxx ~]# vmkping -D

PING x.x.100.140 (x.x.100.140): 56 data bytes

64 bytes from x.x.100.140: icmp_seq=0 ttl=64 time=0.434 ms

64 bytes from x.x.100.140: icmp_seq=1 ttl=64 time=0.049 ms

64 bytes from x.x.100.140: icmp_seq=2 ttl=64 time=0.046 ms

--- x.x.100.140 ping statistics ---

3 packets transmitted, 3 packets received, 0% packet loss

round-trip min/avg/max = 0.046/0.176/0.434 ms

PING x.x.100.1 (x.x.100.1): 56 data bytes

64 bytes from x.x.100.1: icmp_seq=0 ttl=255 time=0.999 ms

64 bytes from x.x.100.1: icmp_seq=1 ttl=255 time=0.837 ms

64 bytes from x.x.100.1: icmp_seq=2 ttl=255 time=0.763 ms

--- x.x.100.1 ping statistics ---

3 packets transmitted, 3 packets received, 0% packet loss

round-trip min/avg/max = 0.763/0.866/0.999 ms

[root@xxx]# ping x.x.101.124

PING x.x.101.124 (10.1.101.124) 56(84) bytes of data.

64 bytes from x.1.101.124: icmp_seq=1 ttl=254 time=0.507 ms

64 bytes from x.1.101.124: icmp_seq=2 ttl=254 time=0.554 ms

64 bytes from x.1.101.124: icmp_seq=3 ttl=254 time=0.644 ms

--- x.1.101.124 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 1999ms

rtt min/avg/max/mdev = 0.507/0.568/0.644/0.060 ms

netappsim1> exportfs

/vol/vol0/home -sec=sys,rw,nosuid

/vol/vol0 -sec=sys,rw,anon=0,nosuid

/vol/vol1 -sec=sys,rw,root=x.x.130.17

Well, there are a few guidelines that should be followed when mounting an NFS datastore on an ESX host.

Here is the list of requirements:

1. Only root should have access to the NFS volume.

2. Only one ESX host should be able to mount the NFS datastore.

How do we achieve this?

1. Create a volume on the NetApp filer in one of the aggregates. Once the volume is created, we need to export it.

2. This can be done from the CLI as follows:

srm-protect> exportfs -p rw=10.21.64.34,root=10.21.64.34 /vol/vol2

srm-protect> exportfs

/vol/vol0/home -sec=sys,rw,nosuid

/vol/vol0 -sec=sys,rw,anon=0,nosuid

/vol/vol1 -sec=sys,rw=10.21.64.34,root=10.21.64.34

Something to remember here: the IP address 10.21.64.34 is that of the VMkernel port, which must be created before mounting the NFS volume on the ESX host.
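Before trying the mount, it can help to check an exportfs line against the two requirements listed earlier. The sketch below is a hypothetical helper, not a NetApp tool, and it assumes the output format shown above: options after a leading "-", separated by commas, with host lists inside one option separated by colons. It treats the requirements as met only when the VMkernel IP is the sole read-write host and also has root access; a bare "rw" (read-write for every host) fails the check.

```python
from typing import Dict, List, Tuple


def parse_export(line: str) -> Tuple[str, Dict[str, List[str]]]:
    """Split one exportfs line into its path and an option -> hosts map."""
    parts = line.strip().split(None, 1)
    path = parts[0]
    options: Dict[str, List[str]] = {}
    if len(parts) == 2:
        for opt in parts[1].lstrip("-").split(","):
            key, _, value = opt.partition("=")
            # Assumption: several hosts in one option are colon-separated.
            options[key] = value.split(":") if value else []
    return path, options


def meets_nfs_requirements(line: str, vmkernel_ip: str) -> bool:
    """Only this VMkernel IP may read/write, and it must have root access."""
    _, options = parse_export(line)
    return (options.get("rw") == [vmkernel_ip]
            and options.get("root") == [vmkernel_ip])
```

Fed the /vol/vol1 line from the second exportfs listing with 10.21.64.34, the check passes; fed the /vol/vol0 line, it fails because that export has a bare "rw" and no root= entry.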

When creating the VMkernel port, ensure that its IP is in the same subnet as the NFS server, or else you will get the error above. After changing the IP subnet, I was able to mount the NFS datastore.

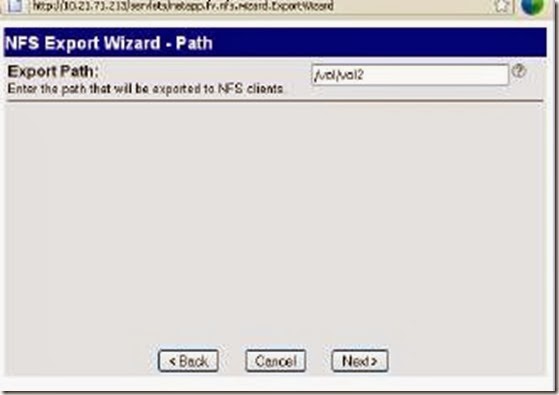
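The subnet rule above is easy to check up front with Python's standard `ipaddress` module. A minimal sketch: the function name is hypothetical, and any netmask or address you pass in other than 10.21.64.34 from this post (for example a /24 mask) is an assumption about your own network, not a value from the filer setup here.

```python
import ipaddress


def same_subnet(vmkernel_ip: str, netmask: str, nfs_server_ip: str) -> bool:
    """True if the NFS server address falls inside the VMkernel port's subnet."""
    # strict=False lets us pass a host address plus mask, e.g. 10.21.64.34/255.255.255.0
    network = ipaddress.IPv4Network(f"{vmkernel_ip}/{netmask}", strict=False)
    return ipaddress.IPv4Address(nfs_server_ip) in network
```

For example, a VMkernel port on a .100.x network with a /24 mask would not share a subnet with a server on .101.x, while a /23 mask would cover both.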

3. From "FilerView", go to NFS > Manage Exports:

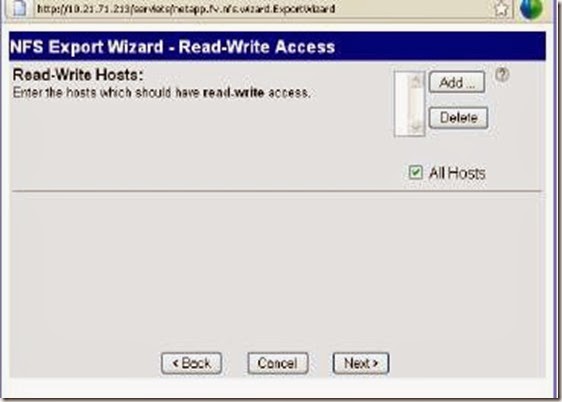

In the Export Options window, the Read-Write Access and Security

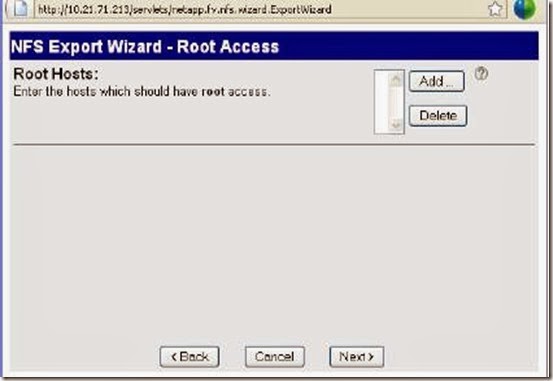

check boxes are already selected. You will need to also select the Root Access

check box as shown here. Then click Next:

Leave the Export Path at the default setting:

In the Read-Write Hosts window, click Add to explicitly add an RW host. Here you need to enter the IP address of the VMkernel port, not the Service Console of the ESX host, or else the rights will not be applied.

Populate the Host to Add field with the VMkernel IP address:

The Read-Write Hosts window should now include my VMkernel IP address. Click Next to move to the Root Hosts window:

Populate the Root Hosts exactly the same way as the Read-Write Hosts, by clicking the Add button. This should again be the VMkernel/IP Storage IP address. When this is populated, click Next:

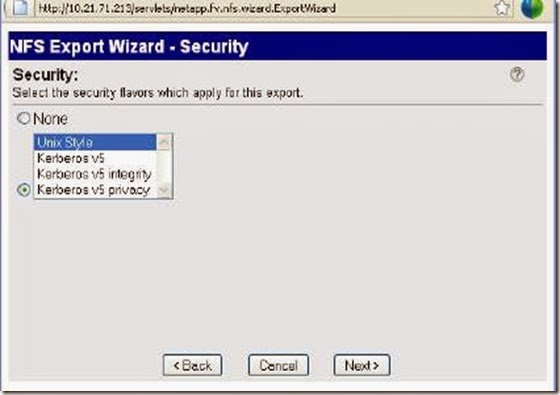

At the Security screen, leave the security flavour at the default of Unix Style and click Next to continue: