1. We were having an issue with one of our ESX hosts, which had 3 VMs with multiple RDM LUNs on it. The host was running fine, but the VMs were getting BSODs with the following error message.

The host itself stayed up, but vmkernel logged the following messages:

vmkernel: 0:00:55:23.452 cpu4:1066)LinSCSI: 3201: Abort failed for cmd with serial=0, status=bad0001, retval=bad0001

Mar 25 22:01:57 xxx vmkernel: 0:00:55:23.458 cpu4:1066)WARNING: ScsiPath: 3802: Set retry timeout for failed TaskMgmt abort for CmdSN 0x0, status Failure, path vmhba1:C0:T0:L2

Mar 25 22:02:37 xxxx vmkernel: 0:00:56:03.465 cpu4:1066)LinSCSI: 3201: Abort failed for cmd with serial=0, status=bad0001, retval=bad0001

Mar 25 22:02:37 xxx vmkernel: 0:00:56:03.471 cpu4:1066)WARNING: ScsiPath: 3802: Set retry timeout for failed TaskMgmt abort for CmdSN 0x0, status Failure, path vmhba1:C0:T0:L2

Mar 25 22:02:41 xxxx vmkernel: 0:00:56:06.931 cpu4:1062)VSCSI: 3183: Retry 0 on handle 8202 still in progress after 62 seconds

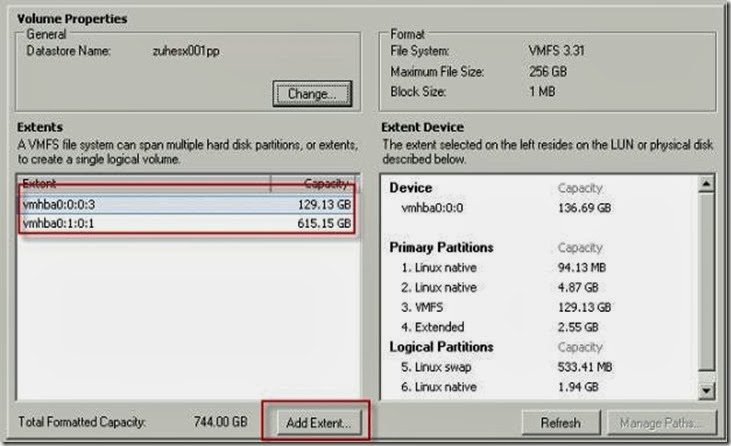
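To pick the affected path out of a long vmkernel log, the warnings above can be filtered with a small script. This is only a sketch: the function name `failed_abort_paths` and the `SAMPLE` list are made up for illustration, the regular expression is based solely on the two ScsiPath lines quoted above, and it is meant to be run on a copy of the log rather than on the host itself.

```python
import re
from collections import Counter

# Based only on the "ScsiPath: 3802" warnings quoted in this post,
# e.g. "... failed TaskMgmt abort for CmdSN 0x0, status Failure, path vmhba1:C0:T0:L2"
PATH_RE = re.compile(
    r"failed TaskMgmt abort.*?path (vmhba\d+):C(\d+):T(\d+):L(\d+)"
)


def failed_abort_paths(lines):
    """Count failed-abort warnings per (adapter, channel, target, lun)."""
    counts = Counter()
    for line in lines:
        match = PATH_RE.search(line)
        if match:
            adapter, channel, target, lun = match.groups()
            counts[(adapter, int(channel), int(target), int(lun))] += 1
    return counts


# Hypothetical sample: three of the log lines from this post.
SAMPLE = [
    "Mar 25 22:01:57 xxx vmkernel: 0:00:55:23.458 cpu4:1066)WARNING: ScsiPath: 3802: "
    "Set retry timeout for failed TaskMgmt abort for CmdSN 0x0, status Failure, "
    "path vmhba1:C0:T0:L2",
    "Mar 25 22:02:37 xxxx vmkernel: 0:00:56:03.465 cpu4:1066)LinSCSI: 3201: "
    "Abort failed for cmd with serial=0, status=bad0001, retval=bad0001",
    "Mar 25 22:02:37 xxx vmkernel: 0:00:56:03.471 cpu4:1066)WARNING: ScsiPath: 3802: "
    "Set retry timeout for failed TaskMgmt abort for CmdSN 0x0, status Failure, "
    "path vmhba1:C0:T0:L2",
]

if __name__ == "__main__":
    for (adapter, channel, target, lun), n in failed_abort_paths(SAMPLE).items():
        print(f"{adapter} channel {channel} target {target} LUN {lun}: {n} failed aborts")
```

The LinSCSI lines carry no path, so only the ScsiPath warnings are counted. In our case every warning pointed at the same path and LUN.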

2. We tried to find out which LUN it was, so we used the SCSI HBA tool shown below. It lists all the VMFS partitions. Since all of our LUNs were configured as RDMs, none of them showed up in the output.

[root@xxxx log]# esxcfg-vmhbadevs -m

vmhba0:0:0:3 /dev/cciss/c0d0p3 496a5f4e-dda2c50a-1326-00237d5adda0

3. Then we tried to find out the managed paths for the LUNs:

Disk vmhba3:0:6 /dev/sdf (25600MB) has 1 paths and policy of Fixed

iScsi 26:1.1 iqn.2000-04.com.qlogic:qle4062c.lfc0908h85049.1<->iqn.1992-08.com.netapp:sn.xxx vmhba3:0:6 On active preferred

Disk vmhba3:0:5 /dev/sde (71687MB) has 1 paths and policy of Fixed

iScsi 26:1.1 iqn.2000-04.com.qlogic:qle4062c.lfc0908h85049.1<->iqn.1992-08.com.netapp:sn.xxx vmhba3:0:5 On active preferred

Disk vmhba3:0:10 /dev/sdj (5120MB) has 1 paths and policy of Fixed

iScsi 26:1.1 iqn.2000-04.com.qlogic:qle4062c.lfc0908h85049.1<->iqn.1992-08.com.netapp:sn.xxx vmhba3:0:10 On active preferred

Disk vmhba3:0:16 /dev/sdp (1399988MB) has 1 paths and policy of Fixed

iScsi 26:1.1 iqn.2000-04.com.qlogic:qle4062c.lfc0908h85049.1<->iqn.1992-08.com.netapp:sn.xxx vmhba3:0:16 On active preferred
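With many LUNs, the path listing above is easier to search once it is parsed. The sketch below assumes the "Disk ..." lines look exactly like the ones shown here. The names `parse_disks` and `find_lun` are hypothetical helpers, not part of any VMware tool.

```python
import re

# Matches lines such as:
# "Disk vmhba3:0:6 /dev/sdf (25600MB) has 1 paths and policy of Fixed"
DISK_RE = re.compile(
    r"^Disk (vmhba\d+):(\d+):(\d+) (\S+) \((\d+)MB\) "
    r"has (\d+) paths and policy of (\S+)"
)


def parse_disks(text):
    """Return one dict per 'Disk' line in the path listing."""
    disks = []
    for line in text.splitlines():
        match = DISK_RE.match(line.strip())
        if match:
            adapter, target, lun, device, size_mb, paths, policy = match.groups()
            disks.append({
                "adapter": adapter,
                "target": int(target),
                "lun": int(lun),
                "device": device,
                "size_mb": int(size_mb),
                "paths": int(paths),
                "policy": policy,
            })
    return disks


def find_lun(disks, lun):
    """Return every parsed disk whose LUN number equals `lun`."""
    return [d for d in disks if d["lun"] == lun]


# Sample copied from the listing in this post (iScsi detail lines omitted).
SAMPLE = """\
Disk vmhba3:0:6 /dev/sdf (25600MB) has 1 paths and policy of Fixed
Disk vmhba3:0:5 /dev/sde (71687MB) has 1 paths and policy of Fixed
Disk vmhba3:0:10 /dev/sdj (5120MB) has 1 paths and policy of Fixed
Disk vmhba3:0:16 /dev/sdp (1399988MB) has 1 paths and policy of Fixed
"""

if __name__ == "__main__":
    for disk in find_lun(parse_disks(SAMPLE), 16):
        print(disk["device"], disk["size_mb"], "MB,", disk["paths"], "path(s)")
```

Each disk here reports a single path, so a problem on that one path leaves the LUN with nowhere to fail over.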

4. We then looked in /proc/scsi/ and read the file named scsi.

If you look at the vmkernel error messages above, they mention the vmhba name along with the LUN number. The scsi file is very helpful for clarifying this further. It shows the NetApp revision that is running and also the type of access each LUN provides.

Host: scsi3 Channel: 00 Id: 00 Lun: 01

Vendor: NETAPP Model: LUN Rev: 7310

Type: Direct-Access ANSI SCSI revision: 04

Host: scsi3 Channel: 00 Id: 00 Lun: 02

Vendor: NETAPP Model: LUN Rev: 7310

Type: Direct-Access ANSI SCSI revision: 04

Host: scsi3 Channel: 00 Id: 00 Lun: 03

Vendor: NETAPP Model: LUN Rev: 7310

Type: Direct-Access ANSI SCSI revision: 04

Host: scsi3 Channel: 00 Id: 00 Lun: 04

Vendor: NETAPP Model: LUN Rev: 7310

Type: Direct-Access ANSI SCSI revision: 04

Host: scsi3 Channel: 00 Id: 00 Lun: 05

Vendor: NETAPP Model: LUN Rev: 7310

Type: Direct-Access ANSI SCSI revision: 04
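The three-line records in this file can be turned into one entry per LUN for easier comparison with the vmkernel messages. This is a rough sketch that assumes the layout shown above (Host, Vendor, and Type lines in that order). `parse_proc_scsi` is a hypothetical name, and the sample text is taken from the output in this post.

```python
import re

HOST_RE = re.compile(r"Host:\s*(\S+)\s+Channel:\s*(\d+)\s+Id:\s*(\d+)\s+Lun:\s*(\d+)")
VENDOR_RE = re.compile(r"Vendor:\s*(\S+)\s+Model:\s*(.*?)\s+Rev:\s*(\S+)")
TYPE_RE = re.compile(r"Type:\s*(.*?)\s+ANSI\s+SCSI\s+revision:\s*(\S+)")


def parse_proc_scsi(text):
    """Group the Host/Vendor/Type lines into one dict per device."""
    entries = []
    current = None
    for line in text.splitlines():
        match = HOST_RE.search(line)
        if match:
            # A "Host:" line starts a new device record.
            current = {
                "host": match.group(1),
                "channel": int(match.group(2)),
                "id": int(match.group(3)),
                "lun": int(match.group(4)),
            }
            entries.append(current)
            continue
        if current is None:
            continue  # skip anything before the first Host line
        match = VENDOR_RE.search(line)
        if match:
            current.update(vendor=match.group(1), model=match.group(2), rev=match.group(3))
            continue
        match = TYPE_RE.search(line)
        if match:
            current.update(type=match.group(1), ansi_rev=match.group(2))
    return entries


SAMPLE = """\
Host: scsi3 Channel: 00 Id: 00 Lun: 01
Vendor: NETAPP Model: LUN Rev: 7310
Type: Direct-Access ANSI SCSI revision: 04
Host: scsi3 Channel: 00 Id: 00 Lun: 02
Vendor: NETAPP Model: LUN Rev: 7310
Type: Direct-Access ANSI SCSI revision: 04
"""

if __name__ == "__main__":
    for entry in parse_proc_scsi(SAMPLE):
        print(entry["host"], "LUN", entry["lun"], entry["vendor"], entry["rev"], entry["type"])
```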

5. We also checked the following location for the HBA firmware version:

cd /proc/scsi/qla4022/

[root@xxx]# ls

1 2 3 4 HbaApiNode

6. We also suspected HP SIM, which had been installed as described in my earlier post, so we finally uninstalled it. To do that, go to the hpmgmt folder and run ./installvm811.sh --uninstall

[root@xzxxx qla4022]# esxupdate -l query

Installed software bundles:

------ Name ------ --- Install Date --- --- Summary ---

3.5.0-64607 20:40:59 12/31/08 Full bundle of ESX 3.5.0-64607

ESX350-200802303-SG 20:41:00 12/31/08 util-linux security update

ESX350-200802408-SG 20:41:00 12/31/08 Security Updates to the Python Package.

ESX350-200803212-UG 20:41:00 12/31/08 Update VMware qla4010/qla4022 drivers

ESX350-200803213-UG 20:41:00 12/31/08 Driver Versioning Method Changes

ESX350-200803214-UG 20:41:01 12/31/08 Update to Third Party Code Libraries

ESX350-200804405-BG 20:41:01 12/31/08 Update to VMware-esx-drivers-scsi-megara

ESX350-200805504-SG 20:41:01 12/31/08 Security Update to Cyrus SASL

ESX350-200805505-SG 20:41:01 12/31/08 Security Update to unzip

ESX350-200805506-SG 20:41:01 12/31/08 Security Update to Tcl/Tk

ESX350-200808206-UG 20:41:02 12/31/08 Update to vmware-hwdata

7. We then checked the console of the ESX host by pressing Alt+F12.

After doing all of the above, we decided to swap the HBA cable. At the time, the HBA was plugged directly into the FAS 2020 using a dual-port QLE4032. We changed to a different HBA, and that seems to have fixed the problem. During the course of troubleshooting, VMware told us that we cannot have 2 dual-port QLE4032 cards, as officially only one is supported. I was surprised when they shared the Configuration Maximums as well. I told them that this might be an honest mistake in that statement. Let's see what VMware has to say.